The GoLaxy Papers: Blueprints for Industrial-Scale Cognitive Warfare

A 399-page internal document leak from GoLaxy, a commercial spinoff of the Chinese Academy of Sciences, reveals a fully automated AI-driven influence operation system capable of profiling individuals, manufacturing synthetic personas, and deploying targeted content at scale. The system integrates DeepSeek, holds 23 million Taiwanese household records, and has been deployed across Hong Kong, Taiwan's elections, and overseas Chinese communities.

The 399-page document leak came from the most mundane of motives. The final two pages are a disgruntled employee's complaint about pay—senior developers capped at 30,000 RMB per month, master's-level data analysts earning under 20,000. The preceding 397 pages describe something far less mundane: a fully automated, AI-driven influence operation system built to profile individuals, generate synthetic personas, and deploy targeted content at scale. The clients include military and civilian intelligence agencies. The targets span Taiwan, the United States, and Hong Kong.

The company is GoLaxy (中科天玑), founded in 2010 as a commercial spinoff of the Chinese Academy of Sciences' Institute of Computing Technology. Researchers Brett J. Goldstein and Brett V. Benson at Vanderbilt University's Institute of National Security obtained the documents. The New York Times first reported on them on August 5, 2025. Vanderbilt made the full archive publicly available in September 2025. In March 2026, Taiwan's Doublethink Lab published a detailed Chinese-language analysis that triggered a second wave of coverage across the Chinese-speaking world.

When contacted by reporters, GoLaxy deleted the government relations pages from its website. The company denied most of the documents' contents without providing counter-evidence. No independent institution has identified the documents as fabricated.

What the System Does

The documents describe what GoLaxy calls an "intelligent public opinion system"—a full pipeline from surveillance to deployment. It monitors social media platforms to identify politically salient topics, builds psychological profiles of target individuals, uses generative AI to produce customized content, and distributes it through networks of synthetic personas.

One name in the technical specifications deserves particular attention: DeepSeek. The Chinese open-source large language model attracted global notice in early 2025 for its reasoning capabilities. The documents show it was integrated into GoLaxy's content generation pipeline shortly after its public release. Open-source means anyone can download, fine-tune, and deploy it—including for the purpose of manufacturing fake accounts designed to pass as real neighbors.

The system can generate over 100 virtual characters operating in Mandarin, Taiwanese Hokkien, and English across more than 10 simulated scenarios. These personas are designed to be "subtle, authentic, and human-like," with real-time adaptive capabilities.

The Scale of the Data

- 6.2 million news articles and social media posts from at least 10,000 Taiwan-related sources

- 5,000 targeted social media accounts

- 75 political parties · 1,478 companies · 13,000+ religious organizations · 24,000 civic groups

- 23 million Taiwanese household registration records (total population: ~23.4 million)

- U.S.: dossiers on 2,000+ political figures, including at least 117 Republican members of Congress

- Taiwan: 170 politicians profiled in detail (affiliations, addresses, education, religion)

The most consequential number is the 23 million Taiwanese household registration records. Taiwan's population is approximately 23.4 million. The ratio speaks for itself: the database approaches comprehensive coverage of every resident on the island. This is not opinion monitoring. A closer analogy is population mapping.
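As a rough worked figure, taking the two rounded numbers above at face value (an assumption, since the leaked records may contain duplicates or outdated entries, and both figures are approximate), the implied coverage is:

```latex
\[
\frac{23 \text{ million records}}{23.4 \text{ million residents}} \approx 0.983 \approx 98\%
\]
```

Even with a generous margin for stale or duplicate entries, that is closer to a census than to a sample.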

On the U.S. side, the documents contain dossiers on more than 2,000 political figures and opinion leaders, including at least 117 Republican members of Congress, with records of their political positions, social connections, and influence metrics. In Taiwan, 170 politicians are profiled in detail—political affiliations, residential addresses, educational backgrounds, religious beliefs. Doublethink Lab's analysis additionally found that the system classifies Taiwanese political figures into four categories: "hardline," "friendly," "swing," and "objective," with at least 1,000 individuals per group.

Known Operations

The documents record operations across three theaters. During Hong Kong's 2020 National Security Law crisis, the system monitored Twitter accounts and suppressed opposing voices, with behavior patterns consistent with what Western platforms later catalogued as "Spamouflage," including the telltale technique of building up accounts with cat photos and celebrity content. Between 2022 and 2023, networks of Facebook accounts disguised as real users amplified pro-zero-COVID narratives on international platforms, targeting overseas Chinese communities. In late 2023, the system helped design pre-election messaging strategies against Taiwan's Democratic Progressive Party, with specific issue framing and audience segmentation plans documented in the files.

Doublethink Lab independently verified 7 accounts from the documents as linked to GoLaxy operations, with behavioral patterns matching the documented descriptions.

From Spamouflage to GoLaxy: A Qualitative Shift

Meta and Twitter have been identifying Spamouflage networks since 2019: high-volume bot accounts posting repetitive pro-Beijing content, with mechanical behavior patterns that platforms could detect relatively easily. That was a numbers game.

GoLaxy plays a different one. It trains AI on real human behavioral patterns to generate accounts statistically indistinguishable from authentic users, then deploys precision targeting based on individual psychological profiles. It does not broadcast a single message to everyone; it sends each target content crafted specifically for them.

Not an Outlier—An Industry

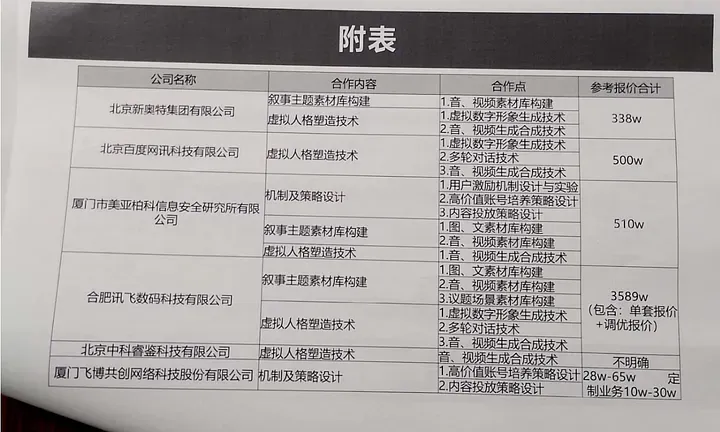

The documents include information about GoLaxy's partner and client networks, pointing to a commercial ecosystem built around influence operations. GoLaxy's capabilities are not one company's exceptional achievement. They are the natural product of an industry operating at market scale, growing under Beijing's "military-civil fusion" policy framework: state research institutes incubate commercial companies, commercial companies execute projects of national strategic value, and the corporate shell provides legal insulation and plausible deniability.

GoLaxy's business model is built on providing influence operation capabilities to clients with national strategic mandates. Strip that core function away, and the company has no reason to exist.

What's Being Missed

Public discussion has fixated on the fact that China conducts AI-driven influence operations. That stopped being news some time ago. The structural questions receiving insufficient attention are more uncomfortable.

DeepSeek's dual identity is one of them. It is a technically impressive open-source model. It is also a tool with documented use in manufacturing synthetic personas. The governance dilemma of open-source AI is made concrete here: permitting global developers to use a model means accepting that it will also be weaponized for influence operations. No international mechanism is currently attempting to resolve this contradiction.

The ceiling on detection technology is equally consequential. Current platform detection depends on identifying behavioral anomalies. When synthetic accounts are statistically indistinguishable from real users, the methodology itself fails. The next generation of detection requires not a better classifier but an entirely new authentication architecture—and no one is seriously building one.
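The limit described above can be illustrated with a deliberately simplified sketch. It assumes a single behavioral feature (posts per day) and a plain z-score threshold. The account names, the numbers, and the threshold are all hypothetical, and real platform detection is far more elaborate. The point is only that an anomaly detector can flag what looks unlike the human baseline, and nothing else.

```python
from statistics import mean, stdev


def anomaly_scores(baseline, candidates):
    """Return each candidate's absolute z-score relative to the baseline sample."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    return {name: abs(value - mu) / sigma for name, value in candidates.items()}


def flag(scores, threshold=3.0):
    """Return the names whose score exceeds the (hypothetical) threshold."""
    return sorted(name for name, z in scores.items() if z > threshold)


# Hypothetical posts-per-day figures for accounts believed to be authentic.
baseline_posts_per_day = [3, 5, 4, 6, 2, 7, 5, 4, 3, 6]

# Hypothetical accounts under review.
candidates = {
    "crude_bot": 120,     # high-volume, repetitive poster
    "mimic_persona": 5,   # synthetic, but tuned to sit inside the human range
    "real_user": 4,
}

scores = anomaly_scores(baseline_posts_per_day, candidates)
flagged = flag(scores)
print(flagged)
```

In this toy setup only the crude bot is flagged. The mimic persona scores the same as the real user, so no choice of threshold on this feature can separate them, which is the failure mode the paragraph describes.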

There is also a less obvious detail: the time lag in how these documents reached Chinese-speaking audiences. The New York Times reported the story in August 2025, with thorough English-language coverage. It was not until March 2026, more than six months later, that Doublethink Lab's Chinese-language analysis brought the information to the broader Chinese-speaking public. The content most relevant to Chinese-language readers took the longest to reach them. That delay is itself a footnote on information asymmetry.

The industrialization of cognitive warfare does not require the adversary to first acknowledge its existence. The 399-page archive shows that the infrastructure has been built, tested, and deployed. The question is not whether this is happening, but whether democratic societies' defense mechanisms are prepared for an information environment in which an adversary can manufacture "neighbors" at commercial scale.